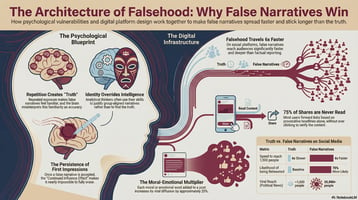


Cognitive Warfare: The Pentagon's Race To Define The Narrative Battle

The U.S. has until the end of this month to formally define a contest its adversaries have been waging for decades.

The U.S. Department of Defense has until March 31, 2026 to do something it has never done: formally define cognitive warfare. A provision in the FY2026 National Defense Authorization Act directs the Secretary of Defense to define the term, assign organizational ownership, align it with existing doctrine, and assess the value of narrative intelligence.

The deadline arrives at a critical moment. NATO allies, adversaries, and academics have spent half a decade building frameworks the Pentagon has yet to adopt. China’s People’s Liberation Army (PLA, the Chinese military) has reorganized around cognitive domain operations. Russia has waged information-psychological campaigns across three continents. France published an offensive influence warfare doctrine in 2021. NATO’s Chief Scientist delivered a landmark report in December 2025 calling cognitive warfare a “strategic research challenge.”

However, the Pentagon’s own 2026 National Defense Strategy has yet to define the concept.

At Vinesight, we build narrative intelligence for governments and global enterprises. We monitor how storylines form, spread, and shift across the information environment, from fringe channels to mainstream media, across 50+ languages. When the FY2026 NDAA directed the Pentagon to define cognitive warfare and assess the value of narrative intelligence, it put a formal name on the operational challenge our technology was designed to address. What follows is our analysis of what the deadline means, why it matters, and what the definition needs to get right.

What cognitive warfare actually means (and why nobody agrees)

Cognitive warfare has no single authoritative definition, which is precisely why Congress raised the issue. The concept sits at the intersection of information warfare, psychological operations, neuroscience, and emerging technology, but is not reducible to any of them. The core claim: the human mind itself has become a domain of warfare, contested as deliberately as land, sea, air, space, and cyberspace.

NATO’s Allied Command Transformation published the most operationally mature definition in its 2023 Cognitive Warfare Exploratory Concept: activities conducted in synchronization with other instruments of power, to affect attitudes and behavior by influencing, protecting, or disrupting individual and group cognition. François du Cluzel, the retired French officer who authored the foundational 2020 NATO ACT Innovation Hub study, was more direct: Cognitive warfare is the art of using technologies to alter the cognition of human targets, most often without their knowledge or consent.

The critical distinction: information warfare seeks to control the flow of data. Psychological operations target what people think about a specific subject. Cognitive warfare targets how people think: the neurological and social processes of perception, reasoning, and decision-making.

A peer-reviewed conceptual analysis published in Frontiers in Big Data notes that 94% of scholarly literature on cognitive warfare has emerged since 2021, underscoring how rapidly the field has crystallized.

Adversaries have been doing this for decades under different names

Russia: The concept of Aktivnye meropriyátiya (Active Measures) best defines the broader Russian view of cognitive warfare. In Russian doctrine, from the Soviet Union to today, cognitive warfare is part of a continuum of operations ranging from sabotage and assassination to influence operations and blackmail. The concept also hints at the fact that those measures are constant, acting as a “white noise”: imperceptible but always present. Moscow views cognitive warfare not as an additional tool, but as a way to secure objectives when physical tools of coercion fall short. For instance, some of Russia’s most recent efforts aim to convince adversaries that Ukraine’s defeat is inevitable, despite Moscow’s own failure to take control of Ukraine after four years of fighting.

Beyond tactical goals, such as shaping the opinion of adversaries, active measures also act as a broader way to shape the cognitive landscape. Russia differs from other actors in that it doesn’t focus solely on pinpointed information operations, but aims to shape the narrative field in the longer term. Instead of simply seeking to steer the public towards one outcome, Russian cognitive warfare aims to undermine overall judgement and to demobilize and demoralize the public in a way that makes it easier to control. Colonel Sergey Komov, an influential Russian intelligence expert, catalogued the methods: distraction, overloading with contradictory information, paralysis through perceived threats, exhaustion, division, and pacification through false security.

Russia operationalized this through “information-psychological operations,” codified in the 2000 Information Security Doctrine and sharpened in the 2016 revision. Russia views itself as engaged in permanent information confrontation with the West — there is no peace-war binary. Du Cluzel’s NATO paper (mentioned above) noted that Russia has prioritized cognitive warfare as a precursor to military phases.

China: cognitive domain operations. As early as 2003, the PLA codified the “Three Warfares” doctrine: psychological warfare, public opinion warfare, and legal warfare. The doctrine can be traced to the 1999 book Unrestricted Warfare by PLA Colonels Qiao Liang and Wang Xiangsui. The book proposed that modern conflict would be fought through financial, cultural, legal, and information means, with the barrier between soldiers and civilians “fundamentally erased.”

Since the late 2010s, the PLA has evolved beyond the Three Warfares towards a more ambitious concept: cognitive domain operations (认知域作战), seeking what theorists call “mind superiority” (制脑权). In one article in the PLA Daily (military journal), the author defines cognitive domain operations as “an all-weather offensive and defensive operation, covering all personnel, utilizing it throughout the entire process, shaping the entire domain, and involving the entire government.”

The goal is to influence the perceptions of civilians, soldiers and leaders alike, in a way that either steers them towards desired outcomes or weakens their ability to make decisions. China’s doctrine is similar to Russia’s in the sense that it views cognitive warfare as part of a continuum of operations. For instance, during Taiwan’s most recent elections, Beijing used both information operations (fake images aimed at delegitimizing one of the candidates) and military exercises and coercive maneuvers aimed at exaggerating the threat of a conflict, should the wrong candidate be chosen. The difference is that China’s doctrine views cognitive warfare as a tool to secure tactical objectives (alongside other methods), as opposed to the more ambitious Russian doctrine, which sees it as a way to secure strategic objectives that can’t be reached without it.

According to PLA doctrine published in the PLA Daily by the Academy of Military Science, militaries conduct cognitive domain operations on three levels:

- Cognitive deterrence: demonstrating overwhelming strength, paralyzing financial systems, or imposing sanctions to deliver psychological shock.

- Cognitive offense: actively shaping the adversary's perception through targeted information operations.

- Cognitive defense: inoculating domestic populations against foreign influence.

What makes China's approach distinct is its scale, its institutional integration, and its embrace of algorithmic methods. PLA scholars affiliated with the Air Force Early Warning Academy have advocated for what they call "algorithmic cognitive warfare": using AI to empower each stage of a cognitive operation. They break the operation into six phases: user profiling, attracting attention, suggesting a frame of reference, inducing a reaction, timely intervention, and supervising gratification. This is not propaganda as most people understand it. It is a precision-targeted, AI-driven influence pipeline designed to profile individuals, capture their attention, frame their interpretation, trigger a response, and then reinforce the desired behavior, all at machine speed and scale.

France and the EU built a first framework for a democratic way of waging cognitive warfare

France has been the most doctrinally advanced Western nation on this front. In October 2021, Defense Minister Florence Parly publicly released the L2I doctrine, France’s military doctrine for influence warfare in cyberspace. It completed a three-pronged framework: defensive cyber (2018), offensive cyber (2019), and influence warfare (2021). Parly stated that “a false, manipulated, or subverted narrative is a weapon that, if used wisely, allows a competitor to win without fighting.”

France’s guiding maxim, articulated in the Strategic Vision of General Thierry Burkhard (France’s military Chief of Staff at the time), is “win the war before the war.” David Pappalardo, writing in War on the Rocks, defined cognitive warfare from the French perspective as a multidisciplinary approach combining social sciences and new technologies to directly alter the mechanisms of understanding and decision-making, aiming to “hack the heuristics of the human brain.”

France’s doctrine is notable as an early attempt to define the limits of cognitive warfare when waged by a democracy. Russia's Aktivnye meropriyátiya has no public doctrine, no disclosed rules of engagement, and no parliamentary oversight of specific operations. China's cognitive domain operations blur the line between military and civilian by design, and operate within an authoritarian system where domestic information control and foreign influence campaigns are part of a single continuum.

By contrast, a democratic definition of cognitive warfare has to acknowledge certain limits. Accordingly, the L2I operates under three explicit constraints that no authoritarian cognitive warfare doctrine imposes on itself:

- First, L2I operations explicitly exclude French national territory. The French military cannot conduct influence operations against its own citizens.

- Second, France pledges not to destabilize foreign electoral processes. The doctrine explicitly states the French military will not target another state's elections through information operations.

- Third, every L2I operation is bound by legal rules in peace and war alike: in peacetime by the UN Charter and principles of non-interference, and during armed conflict by the International Humanitarian Law principles of necessity, proportionality, distinction, and precaution.

Meanwhile, the European Union approaches the problem through its Foreign Information Manipulation and Interference (FIMI) framework. FIMI emphasizes manipulative behavior rather than content truthfulness. The conduct and patterns matter, regardless of whether specific claims are true or false. FIMI and cognitive warfare are complementary: FIMI is a governance framework for detecting and responding to foreign manipulation; cognitive warfare is the broader military concept targeting cognitive processes themselves.

The March 31 deadline and what comes next

The NDAA provision originates in Senate Report 119-39, where the Senate Armed Services Committee stated bluntly: “Despite multiple congressional actions, there remain ambiguities and challenges in core definitions relating to information warfare, with frequent conflation of terms.” The committee directed the Pentagon to define cognitive warfare, identify which organizations own it, and assess the value of narrative intelligence.

The challenge is institutional. No single Pentagon entity currently owns cognitive warfare. Equities are fragmented across the Joint Staff, USSOCOM, USCYBERCOM, the intelligence community, Public Affairs, and service components. The FY2026 NDAA already includes $44.27 million for Cognitive Electromagnetic Warfare under Air Force R&D — but without doctrinal coherence, the money risks being spent without strategic direction.

Four things stand out from the research.

- First, cognitive warfare is genuinely distinct from its predecessors. It targets the process of cognition, not merely the content of thought. The neuroscience dimension, the integration of AI, and the continuous nature of the battlespace mark a qualitative shift from Cold War psychological operations.

- Second, technological acceleration is outpacing institutional adaptation. LLM poisoning, generative AI, deepfakes and other new and emerging tools are moving from theoretical to operational faster than doctrine committees can convene. Adversaries use these technologies as offensive weapons, and defenders need to use them in turn as defensive ones.

- Third, the March 31 deadline represents a strategic inflection point. A Pentagon definition could catalyze an institutional realignment that gives the United States the tools to win the cognitive war.

- Fourth, the private sector is already operationalizing what the Pentagon is defining. Narrative intelligence platforms today monitor fringe and mainstream environments across dozens of languages in near real-time, clustering conversations into storylines, mapping influence networks, and scoring virality before narratives reach critical mass. The doctrinal gap isn't a technology gap. The tools exist. What's missing is the institutional framework to deploy them. If the March 31 definition does what Congress intended, it won't just create bureaucratic clarity. It will unlock the ability to integrate commercial narrative intelligence into the cognitive defense stack, just as the defense establishment once integrated commercial satellite imagery into the intelligence cycle.
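To make the "clustering conversations into storylines" and "scoring virality" steps in the fourth point more concrete, here is a deliberately small Python sketch. It is an illustration under stated assumptions, not a description of how Vinesight or any other platform actually works: the `Post` and `Storyline` types, the word-overlap (Jaccard) similarity, the 0.3 threshold, and the six-hour window are all hypothetical choices made for readability. Production systems would likely rely on multilingual embeddings and far richer signals.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    text: str
    hour: int  # hypothetical timestamp: hours since monitoring began


@dataclass
class Storyline:
    tokens: set
    posts: list = field(default_factory=list)


def tokenize(text):
    # Crude stand-in for real language processing: lowercase words longer than 3 chars.
    return {w for w in text.lower().split() if len(w) > 3}


def jaccard(a, b):
    # Share of words two token sets have in common (0.0 to 1.0).
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster(posts, threshold=0.3):
    # Greedy clustering: attach each post to the most similar storyline,
    # or start a new storyline when nothing is similar enough.
    storylines = []
    for post in posts:
        toks = tokenize(post.text)
        best = max(storylines, key=lambda s: jaccard(s.tokens, toks), default=None)
        if best is not None and jaccard(best.tokens, toks) >= threshold:
            best.posts.append(post)
            best.tokens |= toks
        else:
            storylines.append(Storyline(tokens=set(toks), posts=[post]))
    return storylines


def growth_score(storyline, now, window=6):
    # Posts in the latest window divided by (posts in the previous window + 1).
    # Values above 1 suggest the storyline is accelerating.
    recent = sum(1 for p in storyline.posts if now - window <= p.hour < now)
    earlier = sum(1 for p in storyline.posts if now - 2 * window <= p.hour < now - window)
    return recent / (earlier + 1)
```

The point of the sketch is the shape of the workflow rather than the specific math: posts are grouped into storylines first, and only then is each storyline scored for momentum, so that an analyst sees a ranked list of narratives instead of a stream of individual mentions.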

From doctrine to operations: the narrative intelligence layer

The Pentagon's definitional exercise matters because definitions create requirements, and requirements create budgets. But the cognitive domain won't wait for doctrine to mature. Adversaries are already operating at machine speed, running what PLA scholars describe as algorithmic cognitive warfare: profiling, framing, triggering, reinforcing, all at scale.

Defending against this requires a capability that will have a formal place in the U.S. defense architecture: persistent narrative intelligence. Not social listening, which counts mentions. Not sentiment analysis, which measures mood. Narrative intelligence maps the storylines themselves across the full information environment, from fringe channels to mainstream media, across languages and formats, distinguishing organic conversation from coordinated campaigns in real time.

This capability already exists in the commercial sector, deployed by governments and enterprises that couldn’t afford to wait for doctrinal committees to convene.
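One way to picture the difference between organic conversation and a coordinated campaign is a simple behavioral heuristic: several distinct accounts posting the same text within a short time window. The Python sketch below is a toy illustration of that single signal. The function name `coordinated_groups`, the `(account, timestamp, text)` input format, and the 60-second and three-account thresholds are assumptions introduced here, and real detection would combine many more indicators.

```python
from collections import defaultdict


def normalize(text):
    # Collapse case and whitespace so trivially altered copies still match.
    return " ".join(text.lower().split())


def coordinated_groups(posts, window_seconds=60, min_accounts=3):
    """Flag texts posted by at least `min_accounts` distinct accounts
    within `window_seconds` of each other.

    `posts` is an iterable of (account, timestamp_seconds, text) tuples.
    Returns a dict mapping normalized text to the set of flagged accounts.
    """
    by_text = defaultdict(list)
    for account, ts, text in posts:
        by_text[normalize(text)].append((ts, account))

    flagged = {}
    for text, items in by_text.items():
        items.sort()  # order by timestamp
        start = 0
        for end in range(len(items)):
            # Slide the window start forward until it spans at most window_seconds.
            while items[end][0] - items[start][0] > window_seconds:
                start += 1
            accounts = {acc for _, acc in items[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged.setdefault(text, set()).update(accounts)
    return flagged
```

Note that the heuristic looks only at posting behavior, not at whether the text is true, which echoes the FIMI emphasis on manipulative conduct over content truthfulness.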

Vinesight moves beyond fragment-level detection to map the full narrative landscape across platforms and languages, showing governments not just what's being said, but which narratives are actually reshaping how populations think about their mission, turning information environment monitoring into actionable strategic understanding.


About Vinesight

Vinesight has developed an AI-driven platform that monitors emerging social narratives and identifies, analyzes, and responds to toxic attacks targeting brands, public sector institutions, and causes. We work with the entities that are at risk of such attacks, including the world's largest pharmaceutical companies and the world's most prominent financial firms. Vinesight empowers brands, campaigns, and organizations to protect their narratives and brand, while ensuring that authenticity prevails in the digital space.
